Collaborate in harmony: Orchestrate IT solutions

Logistics service providers maintain that their processes are unique and distinctive. After all, they know best what their customers need and align their business with those needs. But what does this mean for digitalisation? Should they use custom solutions for their IT? Or will standard products support their operations effectively? Given today's technologies, the answer to both questions is "Yes, depending on the circumstances".

Current interfaces and data interchange methods allow new approaches, such as:

- Integration of individual functions and services into an overall system

- Exchange of data across software solutions, hierarchical levels and companies

- Complete integration for everyone in the logistics chain

- Real-time communication among all stakeholders

- Comprehensive custom configuration of standard solutions while maintaining compatibility with future releases

Since there are hardly any technical constraints left, operational goals have become the main criteria for selecting an IT strategy.

The business model decides

Always get the right tool for the job – not just in the building industry, either. This rule of thumb helps companies quickly get their bearings in the software market. After all, freight forwarders who primarily transport general cargo, part load and full load shipments can find so many powerful transport management systems (TMSs) in the software market that they rarely need custom solutions. The story is very different for a heavy cargo forwarder that exports industrial equipment for Asian and North American machine manufacturers by air and sea. It has far fewer suitable solutions to choose from and is unlikely to find software that meets 90 percent of its needs right out of the box. It may be far better off investing the resources to develop a proprietary TMS.

Neither company will find a single application that supports everything it does across its entire logistics process. Both have to consider interfaces and data transfer channels in order to identify the best approach for them. Today's software solutions can be closely interlinked to enable digital order processing. Approaches such as application programming interfaces (APIs) and event sourcing solutions support suitable data management for almost any application.

More flexibility: from FTP to API

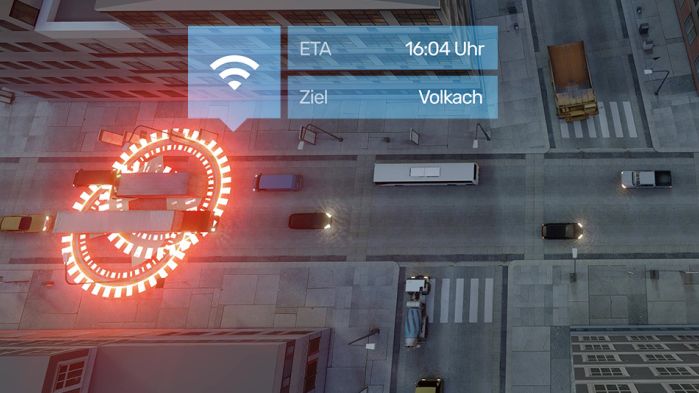

Data exchange methods have changed fundamentally in recent years as logistics networks have grown more complex. It makes little sense to send order data by FTP when planning cycles keep getting shorter under just-in-time, just-in-sequence and warehouse-on-wheels approaches. Once a file has been sent, its data cannot be changed; a message can only be corrected by resending a modified copy. In consumer terms, this would be almost like trying to coordinate a city trip with a group of friends using carrier pigeons instead of smartphones. APIs, on the other hand, allow updates to be made at any time and shared with all stakeholders in real time. Data is exchanged synchronously between the systems: the sending system immediately receives a response from the recipient.
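To make the difference concrete, here is a minimal sketch of the API style of exchange. It is an in-memory stand-in and does not touch a network; the class `OrderApi`, its methods `create` and `update`, and the order fields are all hypothetical names chosen for illustration, not part of any particular TMS. It shows two properties described above: the caller gets a response immediately, and a change is applied to the existing order and passed on to every subscribed stakeholder.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class OrderApi:
    """In-memory stand-in for an order endpoint (hypothetical, no network)."""
    orders: dict[str, dict] = field(default_factory=dict)
    subscribers: list[Callable[[str, dict], None]] = field(default_factory=list)

    def create(self, order_id: str, data: dict) -> dict:
        # Store the order and answer the caller straight away.
        self.orders[order_id] = {**data, "version": 1}
        return self._respond(order_id)

    def update(self, order_id: str, changes: dict) -> dict:
        # Change the existing order in place instead of resending a full copy.
        if order_id not in self.orders:
            return {"status": 404, "order_id": order_id}
        order = self.orders[order_id]
        order.update(changes)
        order["version"] += 1
        return self._respond(order_id)

    def _respond(self, order_id: str) -> dict:
        # Every subscriber sees the new state as part of the same call.
        snapshot = dict(self.orders[order_id])
        for notify in self.subscribers:
            notify(order_id, snapshot)
        return {"status": 200, "order_id": order_id, "order": snapshot}


api = OrderApi()
seen_by_carrier = []  # what a subscribed carrier system would receive
api.subscribers.append(
    lambda oid, order: seen_by_carrier.append((oid, order["pickup"]))
)

api.create("A-1", {"pallets": 4, "pickup": "08:00"})
response = api.update("A-1", {"pickup": "10:30"})
print(response["status"], response["order"]["pickup"], seen_by_carrier)
```

With a file transfer, the later pickup time would have required a second, corrected file and a receiver that knows which copy is current. In the sketch, the version counter and the immediate response take over that job.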

It can wait

However, some connections between systems don't require data to be exchanged in real time. Responses can sometimes be provided long after the request, especially in long-running processes. One example: a shipping order for small parts that have to be picked before being confirmed for transport loading. Event sourcing systems can transfer this data over a distributed streaming platform, which can buffer live data streams. This structure works especially well for balancing loads when systems are scaled up because the event sourcing systems stabilise the data communications. They prevent the sender from overloading the receiver if the receiver processes data more slowly than it is sent, and responses are delivered as they become available. One practical example of this kind of structure is the status data sent from mobile devices used by parcel delivery services and general cargo freight forwarders: if the internet connection is lost while driving in cellular dead zones, the data is stored and sent as soon as the device comes back online. Apache Kafka, an open source distributed streaming platform, is one of the more established solutions for this purpose. It was originally developed at the online business network LinkedIn as a message queue.
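The buffering behaviour described above can be sketched in a few lines. This is a simplified illustration under stated assumptions, not the Kafka API: `EventLog` and `DeviceOutbox` are hypothetical names, everything lives in memory, and the sketch assumes the device itself holds unsent status messages while offline. The point is that the log keeps every event, and each consumer reads from its own position at its own pace, so a slow receiver falls behind instead of being overloaded.

```python
from collections import deque


class EventLog:
    """Append-only log; each consumer tracks its own read position."""

    def __init__(self) -> None:
        self._events: list[dict] = []
        self._offsets: dict[str, int] = {}

    def append(self, event: dict) -> int:
        self._events.append(event)
        return len(self._events) - 1  # position of the new event

    def poll(self, consumer: str, max_events: int = 10) -> list[dict]:
        # The consumer decides how much it takes per call.
        start = self._offsets.get(consumer, 0)
        batch = self._events[start:start + max_events]
        self._offsets[consumer] = start + len(batch)
        return batch

    def lag(self, consumer: str) -> int:
        # Number of events the consumer has not read yet.
        return len(self._events) - self._offsets.get(consumer, 0)


class DeviceOutbox:
    """Holds status messages on the device until a connection is available."""

    def __init__(self, log: EventLog) -> None:
        self.log = log
        self.online = True
        self._pending: deque = deque()

    def report(self, event: dict) -> None:
        self._pending.append(event)
        if self.online:
            self.flush()

    def flush(self) -> int:
        sent = 0
        while self._pending:
            self.log.append(self._pending.popleft())
            sent += 1
        return sent


log = EventLog()
scanner = DeviceOutbox(log)
scanner.report({"parcel": "P1", "status": "loaded"})

scanner.online = False  # driver enters a dead zone
scanner.report({"parcel": "P1", "status": "out for delivery"})
scanner.report({"parcel": "P1", "status": "delivered"})
lag_while_offline = log.lag("tms")  # only the first event has arrived

scanner.online = True  # connection is back
flushed = scanner.flush()

first_batch = log.poll("tms", max_events=2)
second_batch = log.poll("tms", max_events=2)
```

The consumer called `"tms"` here reads two events per call and picks up the remainder on its next poll. Nothing is lost while the device is offline, and the order of the status messages is preserved.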

Custom solutions off the shelf

Combining the benefits of APIs and streaming platforms makes it possible to link TMSs with microservices that handle subtasks in the logistics chain. The result comes very close to a custom solution, so the IT departments of logistics service providers no longer have to invent special solutions for special tasks. Instead, they follow a best-of-breed approach and use the most established specialised solution for each individual process. However, that assumes they can orchestrate all the services they need through suitable interfaces.
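As a rough sketch of what such orchestration can look like, the example below chains two specialised services behind one shared interface and publishes an event after each step. All names (`Service`, `RatingService`, `RoutingService`, `orchestrate`), the order fields and the flat rate per pallet are assumptions made up for illustration. In practice each service would be a separate product reached over its API, and `publish` would write to a streaming platform.

```python
from typing import Callable, Protocol


class Service(Protocol):
    """Shared interface every specialised service is assumed to offer."""

    def handle(self, order: dict) -> dict: ...


class RatingService:
    def handle(self, order: dict) -> dict:
        # Hypothetical flat rate per pallet, for illustration only.
        return {**order, "price_eur": 12.5 * order["pallets"]}


class RoutingService:
    def handle(self, order: dict) -> dict:
        return {**order, "route": [order["origin"], order["destination"]]}


def orchestrate(
    order: dict,
    services: list[Service],
    publish: Callable[[dict], None],
) -> dict:
    # Call each service in turn (synchronous, API style) and publish
    # a status event after every step (asynchronous, streaming style).
    for service in services:
        order = service.handle(order)
        publish({"order_id": order["id"], "step": type(service).__name__})
    return order


events = []
result = orchestrate(
    {"id": "A-1", "pallets": 4, "origin": "Hamburg", "destination": "Lyon"},
    [RatingService(), RoutingService()],
    events.append,
)
```

Because the orchestrator only depends on the shared interface, one service can be swapped for another product without touching the rest of the chain.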

How well do your IT solutions work together?